The ground has shifted beneath academic core facilities, and the current climate demands strategic agility if they are to thrive. Boyd Butler at Molecular Devices reveals how these labs can capitalise on this opportunity to increase value and efficiency.

Academic core laboratories are at an interesting inflection point. Once considered subsidised institutional necessities, these facilities now function more like independent service centres – expected to justify their existence through billable hours, demonstrate return on investment (ROI) and continuously expand their capabilities. Yet they face a key constraint: accomplishing this without increasing headcount.

Having spent decades in academic core facility management before transitioning to industry, I’ve seen this transformation firsthand. The question is no longer whether cores can adapt, but how they can thrive. The answer lies in rethinking operational models – focusing on strategic automation, intelligent instrument selection and user empowerment.

The changing landscape of core facilities

A decade ago, core facilities operated on the understanding that universities absorbed operational deficits as part of their institutional mission. Service contracts, equipment maintenance and staffing shortfalls were covered by institutional support or indirect costs from grants.

That era is over. Today, core facilities face tightening federal research funding, shrinking indirect cost pools and increasing competition, with some institutions now operating multiple cores in the same field, such as Harvard’s seven imaging cores. As a result, core facilities must now justify their existence through billable hours, cover service contracts themselves and prove ROI.

This shift has fundamentally changed how cores operate. Directors must now weigh every decision through a lens of financial sustainability, selecting instruments that ensure high reliability, ease of use and scalability.

Time: the ultimate constraint

Ask any core facility manager about their greatest constraint and the answer is universal: time. A typical day for core staff involves keeping instruments operational, responding to troubleshooting emails, training new users, running samples for customers, managing data and supporting scientific applications.

The mathematics are unforgiving. Based on my experience, around 10 percent of a core’s user base consumes about 70 percent of staff time. These users often have complex sample preparation needs or limited technical backgrounds, requiring a high level of support. When an instrument breaks down, all other work stops. If a key staff member leaves, months, even years, of institutional knowledge can disappear overnight.
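To put that split in perspective, the short Python sketch below works out the per-user support load it implies. The 10 and 70 percent figures are the experience-based estimates quoted above; the 200-user base and 1,000 staff hours are hypothetical numbers chosen only to make the arithmetic concrete.

```python
# Illustrative arithmetic only: the user count and staff hours are hypothetical.
def support_hours_per_user(total_users, staff_hours,
                           heavy_user_share=0.10, heavy_time_share=0.70):
    """Return (staff hours per heavy user, staff hours per other user)."""
    heavy_users = total_users * heavy_user_share
    other_users = total_users - heavy_users
    heavy_hours = staff_hours * heavy_time_share
    other_hours = staff_hours - heavy_hours
    return heavy_hours / heavy_users, other_hours / other_users


heavy, other = support_hours_per_user(total_users=200, staff_hours=1000)
ratio = heavy / other
print(f"Heavy user: {heavy:.1f} h, other user: {other:.2f} h, ratio: {ratio:.0f}x")
```

Under these assumptions, each high-support user draws roughly 21 times as much staff time as anyone else, and that ratio holds whatever the size of the user base.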

This time constraint is palpable. Staff stretched thin have less capacity for proactive user training, which leads to more troubleshooting requests. Solving this requires strategic decisions about instruments, workflows and staff priorities.

Strategic instrument selection

One of the most impactful ways to scale operational excellence is through smarter instrument selection. The most expensive instrument isn’t always the most valuable; the most strategic one is.

During my time managing cores, I made both excellent and regrettable purchasing decisions. The pattern is clear: instruments designed solely for cutting-edge applications or power users often become ‘expensive paperweights’.

When evaluating potential purchases, core directors must therefore consider:

- reliability and uptime: instruments with low downtime reduce staff workload and ensure consistent access for users

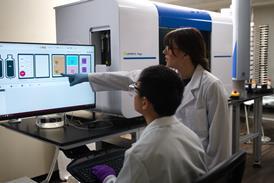

- training scalability: instruments that are intuitive and easy to learn enable faster onboarding and user independence. Some systems take months to master, while others allow users to become proficient in days.

- workflow automation: instruments capable of saving and reproducing standardised protocols reduce staff involvement in repetitive tasks

- flexibility within simplicity: the best instruments serve both novice users with standardised needs and power users seeking advanced capabilities

- data consistency: instruments that deliver reproducible results minimise troubleshooting and ensure reliable data quality.

By focusing on these criteria, core facilities can ensure their instrument portfolio maximises both scientific impact and operational efficiency.

Empowering users through intelligent workflows

The concept of automation in core facilities often conjures images of robotic systems. While robotic automation can be valuable, the real opportunity lies in workflow automation: creating standardised, repeatable protocols that users can execute independently while preserving experimental rigour.

Consider a new graduate student using a microscope for the first time. Traditional approaches require extensive one-on-one training, repeated troubleshooting and ongoing support. Core staff essentially serve as technical consultants for every experiment.

Intelligent workflows change this dynamic. Initial training focuses on experimental logic and sample preparation, while the software guides users through standardised protocols to ensure consistent execution. Once trained, users can operate independently, booking instrument time and generating reliable data without constant supervision.

This shift has profound implications. Staff time previously spent on repetitive training can be redirected towards higher-value activities, such as developing new applications, consulting on experimental strategy and design, and supporting data analysis. These activities not only improve the user experience and satisfaction but also elevate the strategic value and sustainability of the core facility.

Addressing institutional knowledge

Most core facilities rely on deeply experienced staff who understand their instruments, workflows and edge cases in exceptional detail. This institutional knowledge is a significant strength, but when it resides primarily with individuals, it also creates risk and vulnerability. Staff turnover, increasingly common in academic environments, can result in the loss of critical operational expertise. When this happens, facilities often face disruption, retraining burdens and diminished service quality.

The solution is not replacing expertise but codifying it into systems. Instruments with intuitive interfaces and embedded workflows can preserve operational expertise and reduce reliance on individual staff members. By making institutional knowledge accessible to everyone, cores can mitigate the risks associated with turnover.

The service evolution

To remain relevant and sustainable, modern core facilities must embrace a service industry mindset. This doesn’t imply servitude but rather reflects the reality that user experience directly influences utilisation, funding justification and long-term success.

This evolution manifests in several ways:

- Proactive marketing: successful cores actively promote their capabilities. This includes hosting seminars, lunch-and-learns, and free demonstrations to attract new users.

- User engagement: regular communication with users helps core staff anticipate needs and adapt services. Directors must treat their users as customers whose needs drive strategic decisions.

- Flexible revenue models: tiered pricing structures – such as premium rates for staff-assisted use and lower rates for self-service – can optimise both staff time and revenue.

- Customer journey mapping: understanding the end-to-end user experience, from initial inquiry to data analysis, helps cores identify and eliminate pain points.

Cores that fail to adopt this mindset risk losing users to other facilities, both on campus and beyond.

The role of AI and machine learning

Artificial intelligence (AI) and machine learning (ML) represent significant opportunities for core facilities. Their value lies in augmenting human expertise – not replacing it.

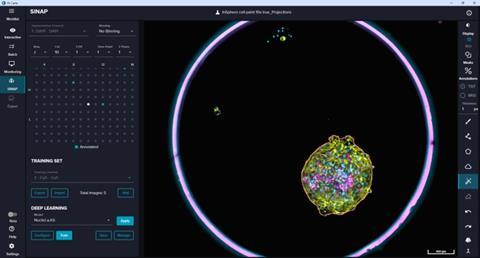

Today, AI is already improving segmentation accuracy, enhancing image analysis and reducing risks associated with limited sample sizes. These capabilities decrease the burden of time-consuming manual analysis while enabling users to perform more advanced workflows with greater consistency and confidence.

Looking ahead, AI-assisted acquisition software may guide users through optimised protocols, provide real-time quality control feedback and even suggest alternative experimental approaches. This will further democratise access to advanced techniques, enabling novice users to achieve results without mastering the underlying complexity.

Far from making core staff obsolete, AI will free them to focus on what machines cannot: troubleshooting sample preparation, consulting on experimental design and acting as true scientific partners to their user communities.

In this model, AI is not replacing expertise but amplifying it. Core facilities become more scalable, more resilient and more impactful, while preserving the scientific judgment that only experienced staff can provide.

Financial realities and ROI

The economics of core facilities has fundamentally shifted. When I started in this field 25 years ago, universities subsidised cores. Today, most cores are expected to recover operating costs primarily through user fees. This reality introduces both risk and opportunity.

Instruments that are heavily utilised can generate enough revenue to cover their service contracts. Many cores use tiered pricing models, which incentivise user independence while maintaining a premium option for those who need it. When utilisation reaches capacity, cores can justify the purchase of additional instruments.

Conversely, low-use instruments become liabilities. Million-dollar systems can sit idle because they are too complex for the average user or require constant staff involvement. These ‘paperweights’ tie up capital and space without generating sufficient ROI.

The strategic imperative is to select instruments that balance capability with usability. By monitoring utilisation metrics and aligning purchasing decisions with actual user needs, core facilities can ensure their investments drive sustainable revenue, efficient operations and long-term growth.
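As a rough illustration of how utilisation tracking might feed such decisions, the Python sketch below computes an instrument's utilisation and checks whether tiered fee revenue covers its service contract. All figures, rates and names (`InstrumentUsage`, `self_rate`, `assisted_rate` and the two example instruments) are hypothetical and are not drawn from any real facility or billing system.

```python
from dataclasses import dataclass


@dataclass
class InstrumentUsage:
    """Hypothetical annual usage record for one instrument."""
    name: str
    available_hours: float     # bookable hours per year
    self_service_hours: float  # hours billed at the lower, self-service rate
    assisted_hours: float      # hours billed at the premium, staff-assisted rate
    service_contract: float    # annual service contract cost

    def utilisation(self) -> float:
        """Fraction of bookable hours that were actually billed."""
        billed = self.self_service_hours + self.assisted_hours
        return billed / self.available_hours

    def revenue(self, self_rate: float, assisted_rate: float) -> float:
        """Fee income under a two-tier hourly pricing model."""
        return (self.self_service_hours * self_rate
                + self.assisted_hours * assisted_rate)

    def covers_contract(self, self_rate: float, assisted_rate: float) -> bool:
        """True if fee income meets or exceeds the service contract cost."""
        return self.revenue(self_rate, assisted_rate) >= self.service_contract


# Assumed example values, chosen only to show the calculation.
workhorse = InstrumentUsage("widefield imager", 2000, 900, 300, 40_000)
paperweight = InstrumentUsage("specialist system", 2000, 100, 150, 50_000)

for inst in (workhorse, paperweight):
    status = "covers" if inst.covers_contract(30, 60) else "does not cover"
    print(f"{inst.name}: {inst.utilisation():.1%} utilised, "
          f"{status} its service contract")
```

A real analysis would also account for staff time, consumables and depreciation; the point here is only that a handful of tracked numbers per instrument is enough to separate workhorses from paperweights.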

A roadmap for the future

Core facilities that will thrive in this new environment share common characteristics:

- Strategic agility: they continuously evaluate their instrument portfolio, retiring underused systems and investing in equipment that serves broad user bases effectively

- User empowerment: they prioritise training and workflows that enable user independence, optimising staff time for higher-value activities

- Service excellence: they actively market their capabilities, adapt to user feedback and embrace a customer-focused mindset

- Knowledge preservation: they implement systems that codify institutional knowledge, reducing reliance on individual staff members

- Financial discipline: they rigorously track utilisation metrics and make data-driven decisions about equipment purchases and staffing.

The cores that struggle will be those that cling to outdated operational models, fail to adapt to changing user expectations, or prioritise scientific novelty over operational fit.

Conclusion

The future of academic core facilities need not be one of perpetual constraint. Through deliberate, strategic instrument selection, workflow automation and a service-oriented approach, cores can actively shape the evolution of shared research infrastructure rather than allow change to be imposed externally.

The question is no longer whether core facilities must adapt, but whether they will lead that transformation. Those that act decisively today will establish the blueprint for sustainable, high-impact core operations in the years ahead. In an era of constrained resources, leadership, not funding, will determine which core facilities thrive.
