The key to faster, smarter drug discovery lies in data that’s often overlooked. By exposing hidden delays and inefficiencies, this data enables teams to shorten discovery cycles and progress promising candidates faster.

Why minimising cycle times matters

Almost every step of the drug discovery process relies on cycles of learning. A common example is the cycle of designing, making, testing and analysing candidate molecules, known as the DMTA cycle, though there are numerous other cyclic processes on the road from target discovery to clinical development. In every case, each cycle builds on the insights of the previous one. For example, in assay development, the focus is on producing an assay that is relevant (hitting the right target), reliable and reproducible, practical (feasible and cost-effective) and capable of producing good-quality data. Achieving this requires multiple iterations of development, with each iteration informed by data from previous rounds. Thus, a delay at any stage will quickly lead to bottlenecks in the discovery process.

The same principle applies to lead optimisation, where each round of synthesis is informed by data from the latest activity profiles. Given that compound synthesis frequently outpaces testing, testing is often the rate-limiting step, so reducing testing cycle times will shorten the overall cycle time. Failure to do so increases the risk of costly synthesis based on incomplete or outdated data, with an increased likelihood of pursuing ineffective compounds.

To avoid investment of time and money in the wrong product, it is wise to make timely decisions about failing a molecule or assay – hence the adage ‘fast to fail’ – in order to divert investment to a more promising candidate.

The importance of operational data

Understanding where savings can be made in cycle times relies on access to accurate operational data, ie, information generated by the day-to-day processes and activities of the drug discovery workflow – outside of the perhaps more obvious data such as metadata of the input materials and the experimental results. Some examples include:

- Timestamped audit event data – records of when a molecule or material moves through the stages of a screening cascade, such as when samples are retrieved from storage, when an assay plate is prepared, when samples are shipped or received, or when assay results are received

- Material availability data – information about which samples are available and accessible for use – vital information when planning an experiment or screening campaign

- Information about the physical items used in the process: eg, which liquid handling machine was used for an aliquoting operation and the exact types of tube or plate labware used at each stage of a sample’s processing

- Information about the environmental conditions at the time of the experiments: eg, temperature and humidity. This may be even more relevant when combined with information about how long samples were exposed to these conditions, such as the time they were out of storage and on the deck of a liquid handler.

These data enable cycle time information to be derived: metrics that track how long it takes for a molecule to move through different stages of the drug discovery process, helping to identify bottlenecks and inefficiencies. One example is a breakdown of the time taken between a request for an assay plate being placed and the plate being physically created.
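As a minimal sketch of how such a breakdown might be derived, the Python below takes the timestamped events for a single assay-plate request and computes the hours spent between consecutive events. The stage names, timestamps and the `stage_durations` helper are all assumptions made for illustration; real audit data would come from whichever inventory or workflow systems are in use.

```python
from datetime import datetime

# Hypothetical audit events for one assay-plate request: (stage, ISO timestamp).
# Stage names and timestamps are invented for illustration.
events = [
    ("request_placed", "2025-03-03T09:00:00"),
    ("samples_retrieved", "2025-03-03T15:30:00"),
    ("plate_prepared", "2025-03-04T10:00:00"),
    ("results_received", "2025-03-06T10:00:00"),
]

def stage_durations(events):
    """Return the hours elapsed between each pair of consecutive events."""
    ordered = sorted(events, key=lambda e: datetime.fromisoformat(e[1]))
    durations = {}
    for (stage_a, ts_a), (stage_b, ts_b) in zip(ordered, ordered[1:]):
        delta = datetime.fromisoformat(ts_b) - datetime.fromisoformat(ts_a)
        durations[f"{stage_a} -> {stage_b}"] = delta.total_seconds() / 3600
    return durations

breakdown = stage_durations(events)
total_hours = sum(breakdown.values())
for step, hours in breakdown.items():
    print(f"{step}: {hours:.1f} h ({hours / total_hours:.0%} of total)")
```

Expressing each step as a share of the total makes it easier to see at a glance which part of the journey dominates the cycle time.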

Such operational data are often overlooked in software systems, where the greatest focus may be on what goes in (source materials and their metadata) and what comes out (assay results), with far less focus on what happens in between. However, operational data deserve greater attention as they hold the key to reducing cycle times in drug discovery.

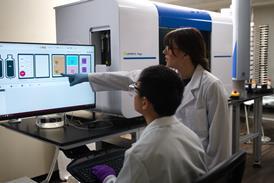

What are the sources of operational data? Some will be captured from manual processes such as sample registration, the placing of a request by a scientist, or when experimental data are recorded in an electronic lab notebook. However, more comprehensive and larger sources of data are likely to be captured from automation platforms.

Automation is increasingly being adopted to accelerate drug discovery, improve data quality and reduce bottlenecks, and is widely considered essential to remaining competitive. Automated liquid handling systems are relied upon to significantly boost throughput and accuracy with long, unattended workflows and precision pipetting, reducing manual errors and improving reproducibility. Automated storage systems may be implemented to support the sample throughput required in vast screening campaigns in large pharmaceutical R&D organisations or to secure the cold chain of valuable samples and improve sample integrity and compliance. The good news is that such automated systems excel at logging event data with accurate timestamps.

Automated liquid handling systems generate prodigious amounts of data, including:

- Protocol start and completion – timestamped records of when a run begins and ends

- Transfer and dispense events – logged per well or plate, including source and destination labware wells, transfer volumes and dilution factors

- Tip usage and tip changes

- Labware placement – events logging where labware and reagents were placed on the deck or carousel

- Transfer time metrics – including average transfer time and active time per machine, used for throughput analysis

- Non-routine events such as error and pause events – when a run is interrupted or fails.

These can be used to derive metrics including transfers per machine per hour, enabling insights into whether efficient use is being made of all the automation at your disposal.
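A rough sketch of the transfers-per-machine-per-hour calculation is shown below. It assumes a simplified run log in which each record holds a machine ID, the run start and end times and the number of transfers performed; the field layout, machine IDs and figures are hypothetical and do not reflect any particular vendor's log format.

```python
from collections import defaultdict
from datetime import datetime

# Hypothetical run log: (machine ID, run start, run end, number of transfers).
runs = [
    ("LH-01", "2025-03-03T08:00:00", "2025-03-03T10:00:00", 960),
    ("LH-01", "2025-03-03T13:00:00", "2025-03-03T14:00:00", 384),
    ("LH-02", "2025-03-03T09:00:00", "2025-03-03T09:30:00", 96),
]

def transfers_per_hour(runs):
    """Return transfers per active hour for each machine."""
    transfers = defaultdict(int)
    active_hours = defaultdict(float)
    for machine, start, end, count in runs:
        elapsed = datetime.fromisoformat(end) - datetime.fromisoformat(start)
        transfers[machine] += count
        active_hours[machine] += elapsed.total_seconds() / 3600
    # Skip machines with no recorded active time to avoid dividing by zero.
    return {m: transfers[m] / active_hours[m] for m in transfers if active_hours[m] > 0}

rates = transfers_per_hour(runs)
for machine, rate in sorted(rates.items()):
    print(f"{machine}: {rate:.0f} transfers per active hour")
```

Note that this divides by active run time. Dividing by the hours in a working shift instead would give a utilisation figure, which may be the more useful measure when asking whether a machine is sitting idle.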

Automated storage systems capture detailed timestamped data on the following:

- Item placement and retrieval – ie, when a barcoded labware item enters or is retrieved from the store

- How long an item is held in the output buffer of a store before retrieval to the lab

- The precise x-y location of tubes being placed into a barcoded rack by the store

- Other non-routine events such as failure to retrieve samples.

These data are linked to unique sample barcodes and may include data such as the user or system that initiated the operation. Additional metrics can be derived from these data, including picks per store per hour to understand utilisation of the store and, if necessary, to implement picking optimisation strategies.
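Picks per store per hour can be derived by bucketing retrieval events by store and clock hour, as in the sketch below. The store IDs, barcodes and timestamps are invented for illustration, and the event layout is an assumption rather than the export format of any specific storage system.

```python
from collections import Counter
from datetime import datetime

# Hypothetical retrieval events: (store ID, tube barcode, timestamp).
picks = [
    ("STORE-A", "TB0001", "2025-03-03T09:05:12"),
    ("STORE-A", "TB0002", "2025-03-03T09:40:03"),
    ("STORE-A", "TB0003", "2025-03-03T10:15:47"),
    ("STORE-B", "TB0104", "2025-03-03T09:59:59"),
]

def picks_per_store_hour(picks):
    """Count picks for each (store, clock hour) pair."""
    counts = Counter()
    for store, _barcode, ts in picks:
        # Truncate the timestamp to the start of its clock hour.
        hour = datetime.fromisoformat(ts).replace(minute=0, second=0, microsecond=0)
        counts[(store, hour)] += 1
    return counts

counts = picks_per_store_hour(picks)
for (store, hour), n in sorted(counts.items()):
    print(f"{store} {hour:%Y-%m-%d %H:00}: {n} picks")
```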

Data-driven cycle time reduction

How can this wealth of operational data be put to work in cycle time reduction? Many data science teams have successfully combined information from fragmented data systems and overlaid this with dashboards that visualise the timeline of a discovery workflow. One such dashboard visualised the progress of molecules from assay request through to assay results, showing when each was submitted, plated and assayed. By drilling down into these event data, it is easy to see where bottlenecks occur, allowing early intervention to mitigate delays and thus reduce overall cycle time. Where samples take an excessive time to reach assay plates, for example, the root causes may include:

- Source samples held in vial types that are incompatible with the automation platforms

- Missing source samples for the planned experiment due to errors in inventory management

- Timing of the request relative to resource availability, eg, over weekends or holidays

- Delays in the shipping of plates or samples where these are managed by an external CRO.

The ability to see where delays occur makes it easy to build strategies to minimise them.
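The kind of drill-down described above can be approximated in a few lines of Python. The sketch assumes that per-stage durations have already been calculated for each request; it then finds the stage with the longest median duration and flags requests that spent more than twice the median in that stage. The request IDs, stage names, values and the twofold threshold are illustrative assumptions, not a recommended standard.

```python
from statistics import median

# Hypothetical stage durations in hours for three assay requests.
# Request IDs, stage names and values are invented for illustration.
requests = {
    "REQ-001": {"request_to_plated": 20.0, "plated_to_assayed": 24.0, "assayed_to_results": 6.0},
    "REQ-002": {"request_to_plated": 70.0, "plated_to_assayed": 26.0, "assayed_to_results": 5.0},
    "REQ-003": {"request_to_plated": 30.0, "plated_to_assayed": 25.0, "assayed_to_results": 8.0},
}

def median_by_stage(requests):
    """Median duration of each stage across all requests."""
    by_stage = {}
    for durations in requests.values():
        for stage, hours in durations.items():
            by_stage.setdefault(stage, []).append(hours)
    return {stage: median(values) for stage, values in by_stage.items()}

def slow_requests(requests, stage, medians, factor=2.0):
    """Requests whose time in `stage` exceeds `factor` times the stage median."""
    limit = factor * medians[stage]
    return [req for req, d in requests.items() if d.get(stage, 0.0) > limit]

medians = median_by_stage(requests)
bottleneck = max(medians, key=medians.get)
flagged = slow_requests(requests, bottleneck, medians)
print(f"Slowest stage by median: {bottleneck} ({medians[bottleneck]:.0f} h)")
print(f"Requests to investigate: {flagged}")
```

The flagged requests are the ones worth examining against the root causes listed above, such as incompatible vial types or a request placed just before a weekend.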

A key challenge in making sense of operational data is integrating data from multiple systems and sources – this can extend to shipping data systems when third-party CROs are part of the workflow. Not all systems are equipped for this integration and the lack of comprehensive timestamps in some platforms can be a challenge. Fortunately, there is greater awareness of the value of such data, with software and hardware vendors increasingly providing comprehensive APIs to support access to it.
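To illustrate the integration problem, the sketch below normalises events from three hypothetical systems, each with its own field names, into a common shape and builds a single timeline for one barcode. Events without a timestamp are kept separately rather than discarded, since they still show that a step happened. All system names, field names and records here are assumptions for illustration; real integrations would go through each vendor's own API or export.

```python
from datetime import datetime

# Hypothetical exports from three systems, each using different field names.
store_events = [{"barcode": "TB0001", "event": "retrieved", "time": "2025-03-03T09:05:00"}]
handler_events = [{"tube": "TB0001", "step": "aliquoted", "ts": "2025-03-03T11:20:00"}]
shipping_events = [{"sample_id": "TB0001", "status": "shipped", "timestamp": None}]

def normalise(records, source, id_key, event_key, time_key):
    """Map one system's records onto a common set of field names."""
    result = []
    for record in records:
        raw = record[time_key]
        result.append({
            "barcode": record[id_key],
            "source": source,
            "event": record[event_key],
            "time": datetime.fromisoformat(raw) if raw else None,
        })
    return result

def build_timeline(barcode, *streams):
    """Return (time-ordered events, events lacking a timestamp) for one barcode."""
    events = [e for stream in streams for e in stream if e["barcode"] == barcode]
    timed = sorted((e for e in events if e["time"] is not None), key=lambda e: e["time"])
    untimed = [e for e in events if e["time"] is None]
    return timed, untimed

timed, untimed = build_timeline(
    "TB0001",
    normalise(store_events, "store", "barcode", "event", "time"),
    normalise(handler_events, "liquid_handler", "tube", "step", "ts"),
    normalise(shipping_events, "shipping", "sample_id", "status", "timestamp"),
)
for e in timed:
    print(f"{e['time']:%Y-%m-%d %H:%M} {e['source']}: {e['event']}")
print(f"Events without timestamps: {[e['event'] for e in untimed]}")
```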

Using operational data also has a broader impact. By making cycle times transparent, organisations can benchmark performance, justify investments in laboratory automation and foster a culture of continuous improvement. The availability of these data empowers teams to streamline workflows proactively and accelerate the delivery of new therapies, rather than identifying problems retrospectively.

Building for the future: AI and data lakes

With operational data as a foundation, there is also potential to incorporate other discovery data – such as historical experimental results and reagent and sample batch information – and apply the power of artificial intelligence/machine learning (AI/ML). These technologies can make sense of the sheer volume and complexity of such data to produce further insights that would otherwise be out of reach. For example, AI can analyse historical experimental results and combine these with operational data to assist in predicting which assay designs, plate configurations and automation platforms will yield the most efficient outcomes, thus saving time and resources.

Many drug discovery organisations are starting to implement data lakes as the central enabling infrastructure that will underpin integrated analytics and AI/ML. The availability of managed services from many big-name cloud-infrastructure providers makes building such a data infrastructure increasingly viable. Software vendors are embracing these technologies to generate greater value and insights from data and platforms. When implementing or replacing software systems, careful consideration should be given to ensuring that critical data are captured and accessible. Choosing providers who actively partner with other vendors to support integrations among their platforms will provide a greater chance of delivering the desired outcome.

Conclusion

Minimising cycle times in drug discovery is critical as each iterative process builds on prior insights, with delays causing bottlenecks that impact the entire workflow. Operational data, often overlooked, is essential for identifying inefficiencies and enabling faster decision-making.

Automation platforms are a major source of these data, which can be captured and used to improve operational efficiency. Combining data from multiple systems is possible, with modern APIs and vendor partnerships improving integration.

Combining operational data with other sources of discovery data will enable AI/ML to assist in designing efficient experiments and workflows, supported by data lakes and cloud services, enhancing decision-making and accelerating therapy development.

Cycle time reduction is not just operational; it is strategic. Faster turnaround at every stage of the drug discovery process enables scientists to make timely decisions about compound progression, assay prioritisation and resource deployment. This is crucial in the highly competitive environment of drug discovery.
